I have a database formatted as a table with various columns containing information. I would like to know the best method to extract information based on a given prompt. This could include counts, written information, or insights. I’m looking for the most effective search method to use.

I attempted to use Generative Search with Gemini alongside text2vec-transformers (paraphrase-multilingual-MiniLM-L12-v2) using grouped_task, but it is inaccurate. I tried using near_text with the query as well, but it didn’t help. The information provided does not match what is in my database. For example, if I ask, “What are the most common crimes in São Paulo?” it returns imprecise information due to the limit parameter, and each execution provides different results because the data within the limit changes. Sometimes, the model even hallucinates, stating that there is no information when there actually is.

Is there anything I can do to maintain data consistency and ensure it iterates through my entire database to provide accurate information?

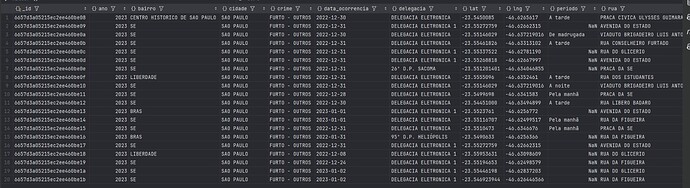

Below is my database, in Portuguese (migrated from MongoDB to Weaviate):

Hi @thigarcias!

Welcome to our community

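I am not sure your dataset can provide good context for answering this kind of question. Generative search only sees the objects returned within the limit, so it cannot count or summarize across the whole table.

If it were, for example, an essay on all crimes, the model could potentially extract something that answers the prompt.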
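To make the point concrete, here is a minimal sketch in plain Python (it does not call Weaviate) of why a limit-bounded retrieval gives a different answer than a count over every row. The records, the `city` and `crime` field names, and the `count_crimes` helper are all hypothetical, made up only for illustration.

```python
from collections import Counter

# Hypothetical records standing in for rows in the collection.
records = (
    [{"city": "São Paulo", "crime": "roubo"}] * 5
    + [{"city": "São Paulo", "crime": "furto"}] * 3
    + [{"city": "São Paulo", "crime": "fraude"}] * 1
    + [{"city": "Rio de Janeiro", "crime": "furto"}] * 4
)


def count_crimes(rows, city):
    """Count crime types for one city across the rows that were passed in."""
    return Counter(r["crime"] for r in rows if r["city"] == city)


# Full scan: every matching row contributes to the count.
full_counts = count_crimes(records, "São Paulo")

# Limited retrieval: only a subset of 4 rows ever reaches the model,
# so the "most common crime" depends on which rows landed in the limit.
limited_counts = count_crimes(records[5:9], "São Paulo")

print("all rows:", full_counts.most_common(1))
print("limit=4 :", limited_counts.most_common(1))
```

For counting questions, an aggregation over the whole collection is probably a closer fit than generative search. Weaviate has aggregate queries for this, but please check the documentation for the exact calls in your client version.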

Check out our recipes here:

They can give you some insights, as can this page with demos of use cases:

Let me know if this helps

Thank you for responding!

I wanted to ask you something else: how much can the embedding model affect the results of the prompts? As I mentioned, I am using paraphrase-multilingual-MiniLM-L12-v2. Could it be affecting the information extraction? When I searched for good models that support Portuguese, it was the first one I found, so I decided to use it. Do you know of any others that you would recommend?

Hi! Brazilian here too

I have seen good results from Cohere Embed v3 as well as OpenAI.

For other local/open-source models, you can also check Ollama.

Here is a nice article on that: OpenAI vs Open-Source Multilingual Embedding Models | by Yann-Aël Le Borgne | Towards Data Science

The model has a direct impact on the vectors you get for the content you vectorize, and therefore on which objects a near_text search ranks as most similar to your query.
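As a rough illustration of that impact, here is a small sketch with hand-made 2-dimensional vectors. They are not output from any real embedding model; `model_a`, `model_b`, and the document names are hypothetical. It only shows that the same query and documents can rank differently when the vectors change.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_match(vectors):
    """Return the name of the document closest to the query vector."""
    query = vectors["query"]
    docs = {name: vec for name, vec in vectors.items() if name != "query"}
    return max(docs, key=lambda name: cosine(query, docs[name]))


# Made-up vectors for the same query and documents under two hypothetical models.
model_a = {"query": [1.0, 0.0], "doc_roubo": [0.9, 0.1], "doc_furto": [0.2, 0.8]}
model_b = {"query": [0.6, 0.8], "doc_roubo": [1.0, 0.0], "doc_furto": [0.5, 0.9]}

print("model_a top match:", top_match(model_a))
print("model_b top match:", top_match(model_b))
```

So it is worth running a handful of your own Portuguese queries against each candidate model and comparing what comes back first.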

Let me know if this helps.

Thanks!